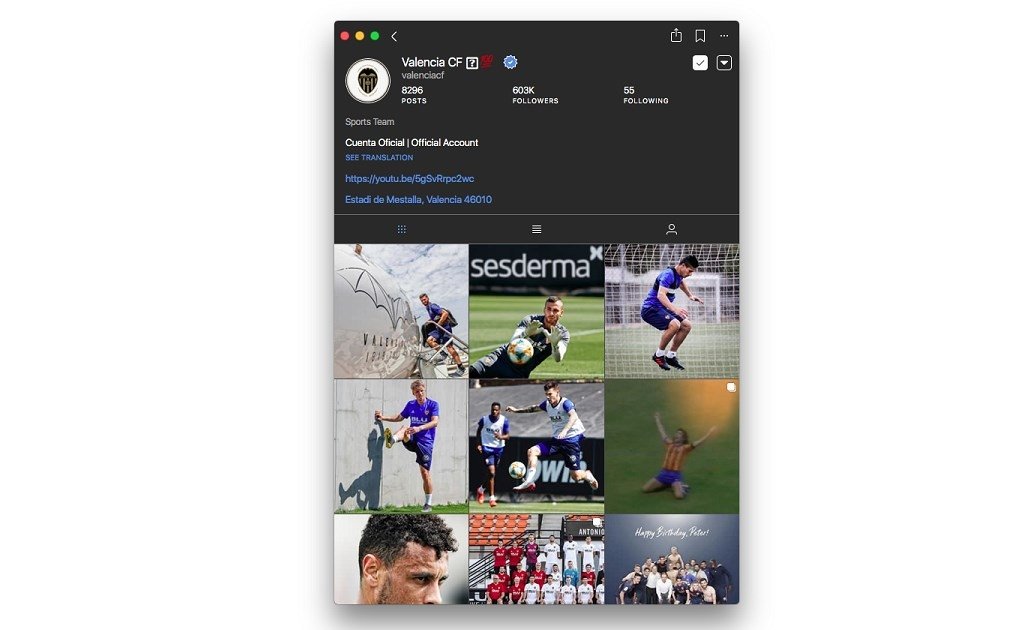

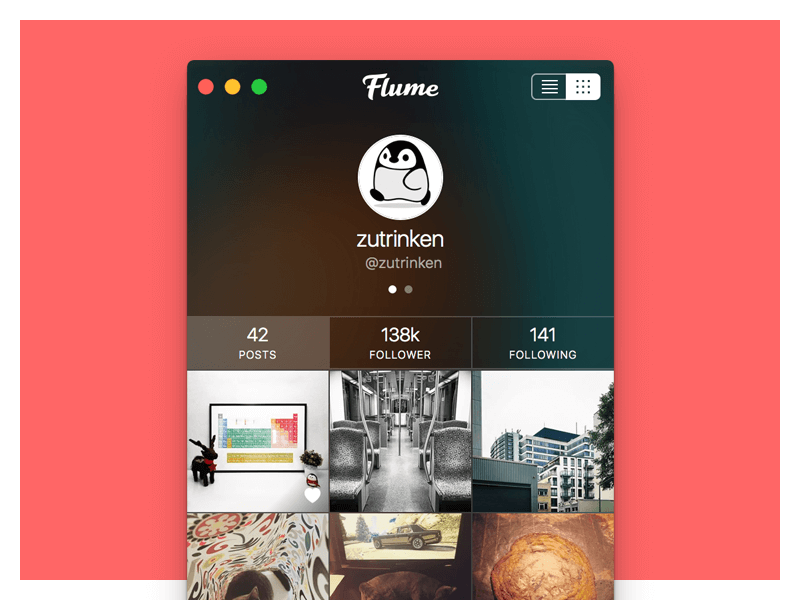

**Note:** We use the word “ingestion” here; ingesting data simply means accepting data from an outside source and storing it in Hadoop.

What makes this chain of sources, channels, and sinks work is the Flume agent configuration, which is stored in a local text file structured like a Java properties file. Multiple agents can be configured in the same file. In the context of Flume, compatibility is key: an Avro event needs an Avro source, for instance, and a sink should deliver events in a form suitable for the destination. Avro, Apache’s remote procedure call and serialization framework, is the typical way of sending data across a network with Flume, since it serializes data efficiently into a compact binary format.
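To make the agent configuration more concrete, here is a minimal sketch of what such a properties-style file might look like for a single agent that receives Avro events and writes them to HDFS. The agent name `agent1`, the component names (`avroSrc`, `memCh`, `hdfsSink`), the port, and the HDFS path are all illustrative assumptions, not values from any real deployment.

```properties
# Hypothetical agent "agent1": declare its source, channel, and sink.
agent1.sources = avroSrc
agent1.channels = memCh
agent1.sinks = hdfsSink

# Avro source: listens for Avro events sent over the network.
# Bind address and port are assumed values.
agent1.sources.avroSrc.type = avro
agent1.sources.avroSrc.bind = 0.0.0.0
agent1.sources.avroSrc.port = 4141
agent1.sources.avroSrc.channels = memCh

# In-memory channel that buffers events between source and sink.
agent1.channels.memCh.type = memory
agent1.channels.memCh.capacity = 10000
agent1.channels.memCh.transactionCapacity = 1000

# HDFS sink: writes events to an assumed HDFS location.
agent1.sinks.hdfsSink.type = hdfs
agent1.sinks.hdfsSink.hdfs.path = hdfs://namenode:8020/flume/events
agent1.sinks.hdfsSink.hdfs.fileType = DataStream
agent1.sinks.hdfsSink.channel = memCh
```

Every property is prefixed with the agent name, which is how several agents can share one file: a second agent would simply use a different prefix. Assuming the file is saved as `example.conf`, an agent like this would typically be started with something along the lines of `flume-ng agent --conf conf --conf-file example.conf --name agent1`.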

The data can be of any kind, but Flume is particularly well suited to handling log data, such as the logs from web servers.

In other words, Flume is designed for the continuous ingestion of data into HDFS. Some of the data that ends up in HDFS may land there through database load operations or other batch processes, but what if we want to capture data flowing in high-throughput streams, such as application log data? Apache Flume is the widely used standard way to do that easily, efficiently, and safely.

Apache Flume, a top-level project of the Apache Software Foundation, is a distributed system for aggregating and moving large amounts of streaming data from various sources to a centralized data store.